NORAA

NORAA kinematics design development

NORAA design development

NORAA QuickDraw + SketchRNN development

NORAA [Machinic Doodles]

Machinic Doodles is a live, interactive drawing installation that facilitates collaboration between a human and a robot named NORAA, a machine that is learning how to draw. It explores how we communicate ideas through the strokes of a drawing, and how a machine might also be taught to draw through learning rather than through pre-programmed, explicit instruction.

While simple pen strokes may not resemble reality as captured by more sophisticated visual representations, they do tell us something about how people represent and reconstruct the world around them. The ability to immediately recognise and depict objects, and even emotions, from a few marks, strokes and lines is something that humans learn as children.

Machinic Doodles is interested in the semantics of lines and the patterns that emerge in how people around the world draw – what governs the rules of geometry that make us draw from one point to another in a specific order? The order, speed, pace and expression of a line, along with its constructed and semantic associations, are of primary interest; the generated figures are simply the means and the record of the interaction, not the final motivation.

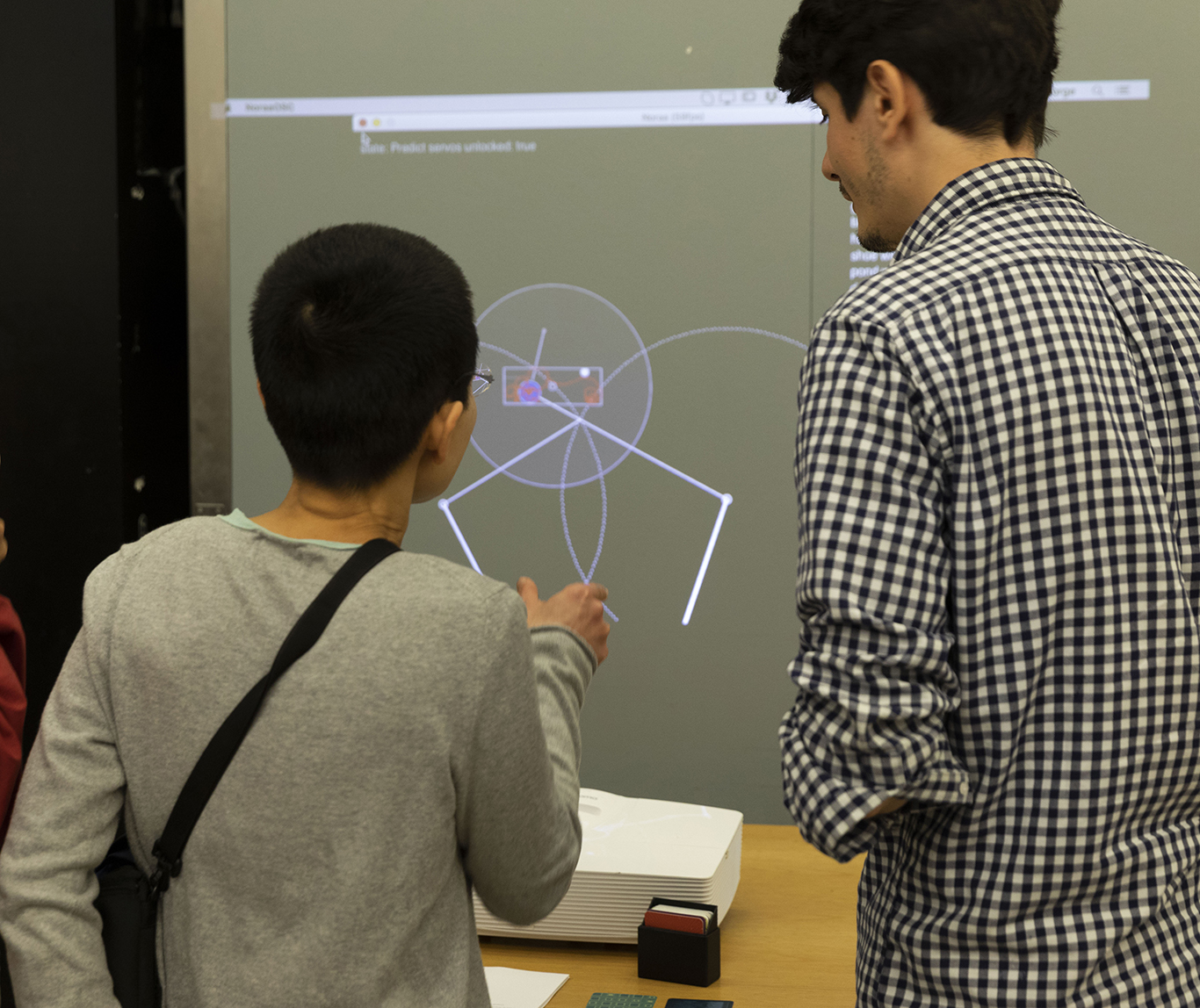

The installation is essentially a game of human-robot Pictionary: you draw, the machine takes a guess, and then draws something back in response. The project demonstrates how a drawing game based on a recurrent neural network, combined with real-time human drawing interaction, can be used to generate a sequence of human-machine doodle drawings. As there are more classification models than generative models (i.e. the machine’s ability to classify exceeds its ability to draw), the work inherently explores this gap in the machine’s knowledge, as well as the creative possibilities afforded by the machine’s misinterpretations. Drawings are not only used for guessing; they are also analysed for spatial and temporal characteristics to inform drawing generation.
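The gap between what the machine can recognise and what it can draw can be pictured as a lookup that sometimes misses. The sketch below is only an illustration under stated assumptions: the function name, the example labels and the fallback rule (take the highest-ranked guess that has a generative model, otherwise pick one at random) are hypothetical and are not taken from the NORAA software.

```python
import random
from typing import Optional, Sequence


def choose_generative_model(
    ranked_guesses: Sequence[str],
    drawable_labels: Sequence[str],
    rng: Optional[random.Random] = None,
) -> str:
    """Pick a label the machine can draw, given the classifier's ranked guesses.

    Hypothetical rule: use the best-ranked guess that has a generative model;
    if none of the guesses can be drawn, fall back to a random drawable label.
    """
    if not drawable_labels:
        raise ValueError("at least one generative model is required")

    drawable = set(drawable_labels)
    for label in ranked_guesses:
        if label in drawable:
            return label  # direct match between classification and generation

    # No guess can be drawn: choose an alternative (assumed here to be random).
    rng = rng if rng is not None else random.Random()
    return rng.choice(list(drawable_labels))


# Example with made-up labels: the classifier knows "yoga", the generator does not.
print(choose_generative_model(["yoga", "cat"], ["cat", "bird", "owl"]))
```

A random fallback is the simplest possible choice; a rule based on the spatial or temporal character of the human drawing would be an equally plausible alternative.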

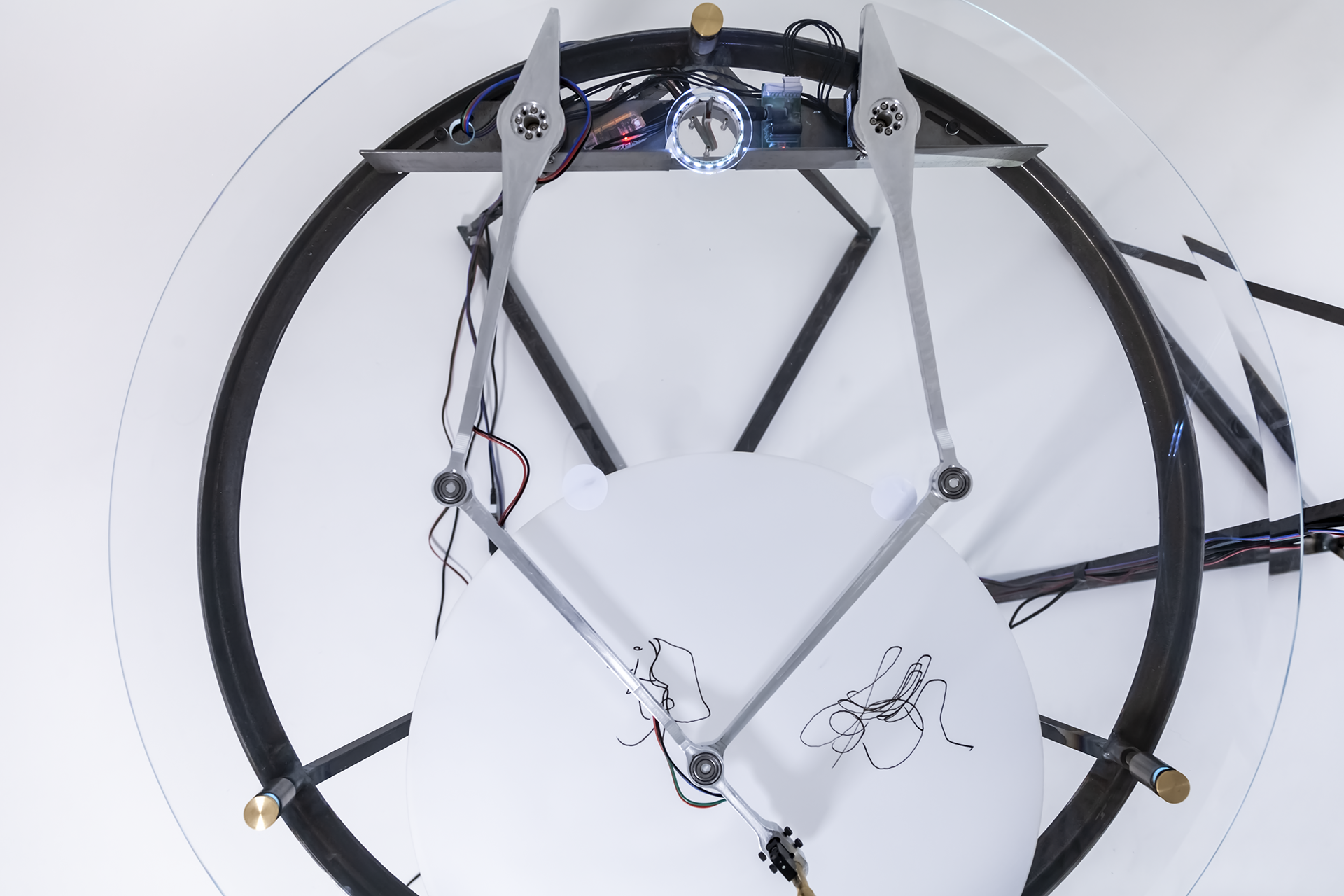

An interesting aspect of the work is that NORAA is visually ‘blind’ in the sense that there is no camera and no image analysis – the drawings are encoded by the machine through a movement- and sequence-based approach (recording the motor rotations over time, along with timestamps for the pen up/down switch). The motor angle recordings are then translated to stroke data through the kinematics of the mechanism, before being sent to the drawing classifier. Where the classified label has no matching generative model, an alternative generative model is chosen and drawn by the machine.
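The translation from motor recordings to stroke data can be sketched as follows. This is a minimal illustration, not the installation's code: it assumes a simple two-link planar arm with hypothetical link lengths, angles in radians, and a stroke layout of `[xs, ys, ts]` per stroke that loosely mirrors the raw QuickDraw data. The actual geometry of NORAA's mechanism is not described here and may differ.

```python
import math
from typing import List, NamedTuple, Tuple

# Hypothetical link lengths in millimetres (the real dimensions are not given).
UPPER_ARM_MM = 200.0
FOREARM_MM = 200.0


class Sample(NamedTuple):
    t_ms: float      # timestamp of the recording, in milliseconds
    shoulder: float  # first joint angle, radians
    elbow: float     # second joint angle, radians, relative to the first link
    pen_down: bool   # state of the pen up/down switch


def forward_kinematics(shoulder: float, elbow: float) -> Tuple[float, float]:
    """Pen-tip position of an assumed two-link planar arm."""
    x = UPPER_ARM_MM * math.cos(shoulder) + FOREARM_MM * math.cos(shoulder + elbow)
    y = UPPER_ARM_MM * math.sin(shoulder) + FOREARM_MM * math.sin(shoulder + elbow)
    return x, y


def samples_to_strokes(samples: List[Sample]) -> List[List[List[float]]]:
    """Group consecutive pen-down samples into strokes of [xs, ys, ts]."""
    strokes: List[List[List[float]]] = []
    current = None
    for s in samples:
        if not s.pen_down:
            current = None  # pen lifted: the next pen-down sample starts a new stroke
            continue
        if current is None:
            current = [[], [], []]
            strokes.append(current)
        x, y = forward_kinematics(s.shoulder, s.elbow)
        current[0].append(x)
        current[1].append(y)
        current[2].append(s.t_ms)
    return strokes


# Example: two strokes separated by a pen lift.
recording = [
    Sample(0, 0.00, 0.5, True),
    Sample(10, 0.05, 0.5, True),
    Sample(20, 0.10, 0.5, False),
    Sample(30, 0.15, 0.5, True),
]
print(len(samples_to_strokes(recording)))
```

Because position is derived purely from joint angles and the pen switch, no camera is needed: the order and timing of each stroke are preserved directly in the recording.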

…

NORAA utilises the Google QuickDraw dataset, the QuickDraw Classifier, and custom trained models for SketchRNN. The main software interface is built with Processing (interaction + drawing kinematics), Python (actuator communication) and Node.js (QuickDraw + SketchRNN communication).

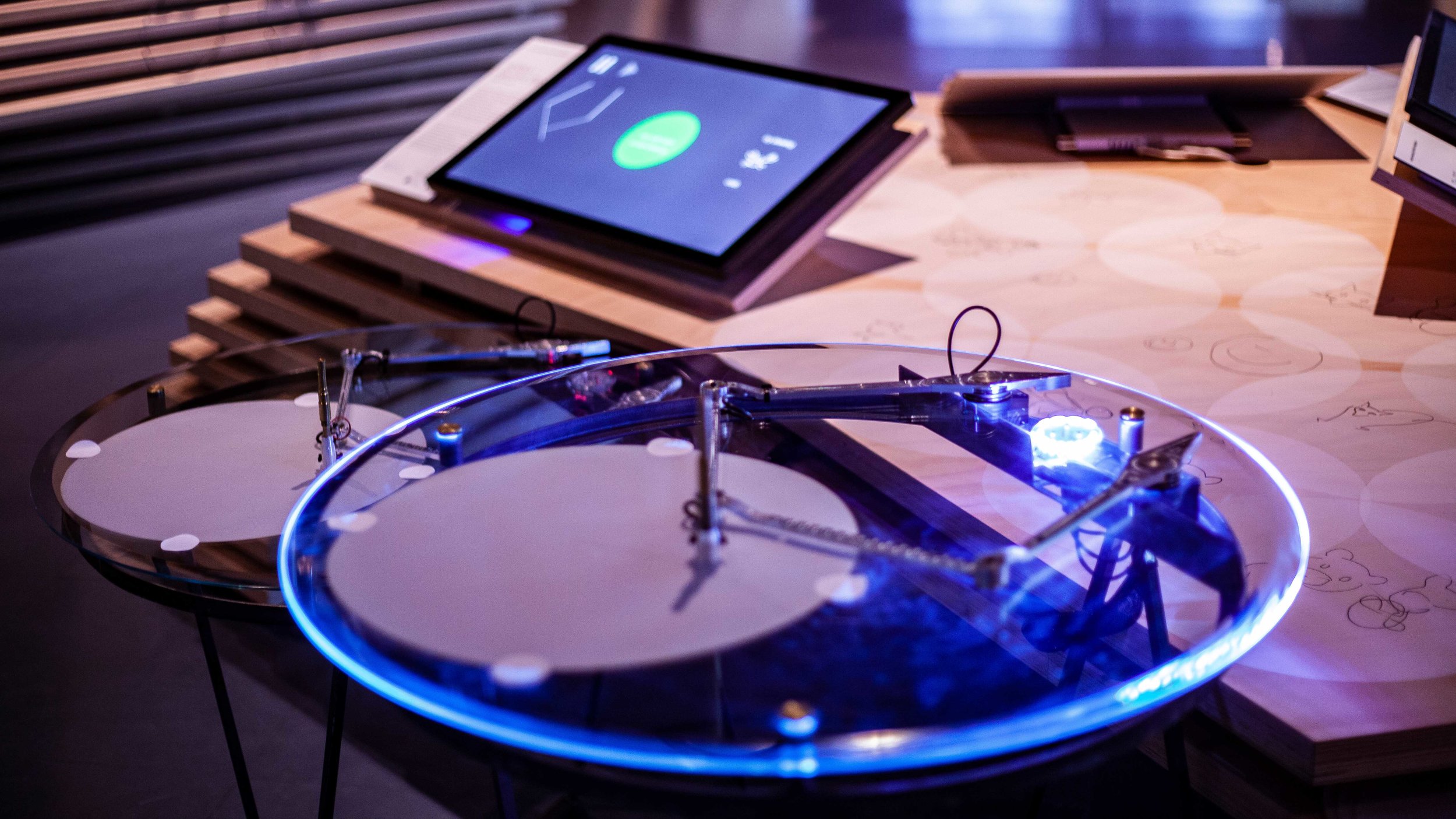

The physical machine is a bespoke drawing mechanism comprising custom machined aluminium arms + pen apparatus, Dynamixel smart actuators, interactive lighting elements, and bespoke glass + steel stands.

…

NORAA is currently live at the new Ars Electronica Center’s year-long exhibition Compass: Navigating the Future / Understanding AI. The installation allows members of the public to draw with NORAA, and to contribute drawings to the exhibition.

…

NORAA was first exhibited as a live, interactive drawing game installation: 'Machinic Doodles' at the V&A Museum’s Digital Design Weekend 2018.

...

Project team: Jessica In, George Profenza @sensori.al + Sam Price @mr_ribena.

...

Thank you: James McVay, Krina Christopoulou, Naomi Lea, Ed Taft, Jess Chidester. Thank you also to IALab @interactivearchitecturelab, Ruairi Glynn @ruairiglynn, Sean Malikides @oldfortune_ for their advice and support. Irini Papadimitriou, Atau Tanaka, Theo Papatheodorou from Goldsmiths + V&A. Stephan Feichter + Kristina Maurer + Ars Electronica Center Linz.

...

Project made possible through the Bartlett APF Grants; exhibition at Digital Design Weekend through the Goldsmiths Computational Arts Residency.

...

Machinic Doodles / NORAA live at the Ars Electronica Center’s new year-long exhibition Understanding AI, Compass: Navigating the Future.

Machinic Doodles / NORAA live at the Victoria and Albert Museum's Digital Design Weekend, 22-23rd September 2018

NORAA Choreography Drawing